The Inference ASIC Fork

Why GPU-First AI Economics Are Becoming a Structural Disadvantage.

By Armando Pereira | Founder, PVentures Consulting | Senior Member IEEE | Co-founder, OpenFog Consortium (IEEE 1934) | President, Autonomous Vehicle Computing Consortium | Former VP/GM Optical BU, Centillium Communications (CMOS PON SoC, NTT-qualified)

👋 Welcome to The Vector™

The Vector™ is the weekly directional deep dive for execs, founders, and investors operating in deep tech. Each issue tracks a single development, technology shift, regulatory move, or competitive realignment to its directional endpoint: where it is heading, how consequential it is, and what the next ninety days will force you to decide.

🎯 Why Now

In the five weeks preceding this issue, three separate signals confirmed that the economics of AI inference are fracturing from the economics of AI training.

Google announced a third-generation Tensor Processing Unit deployment at a scale that places its internal inference cost below the equivalent rate it charges external cloud customers.

Broadcom disclosed that custom ASIC revenue grew 45 percent year-over-year in its most recent fiscal quarter, against 16 percent for the GPU segment across the same reporting window.

Cognichip, a stealth-mode startup founded by Faraj Aalaei, who built Centillium Communications into a DSL silicon vendor before its acquisition, emerged publicly with a physics-informed AI system it claims compresses chip design cycles by more than 50 percent and reduces total design cost by approximately 75 percent. Lip-Bu Tan, who served on the Centillium board and now leads Intel as CEO, joined the Cognichip board on April 1, 2026.

Those three signals share a common denominator:

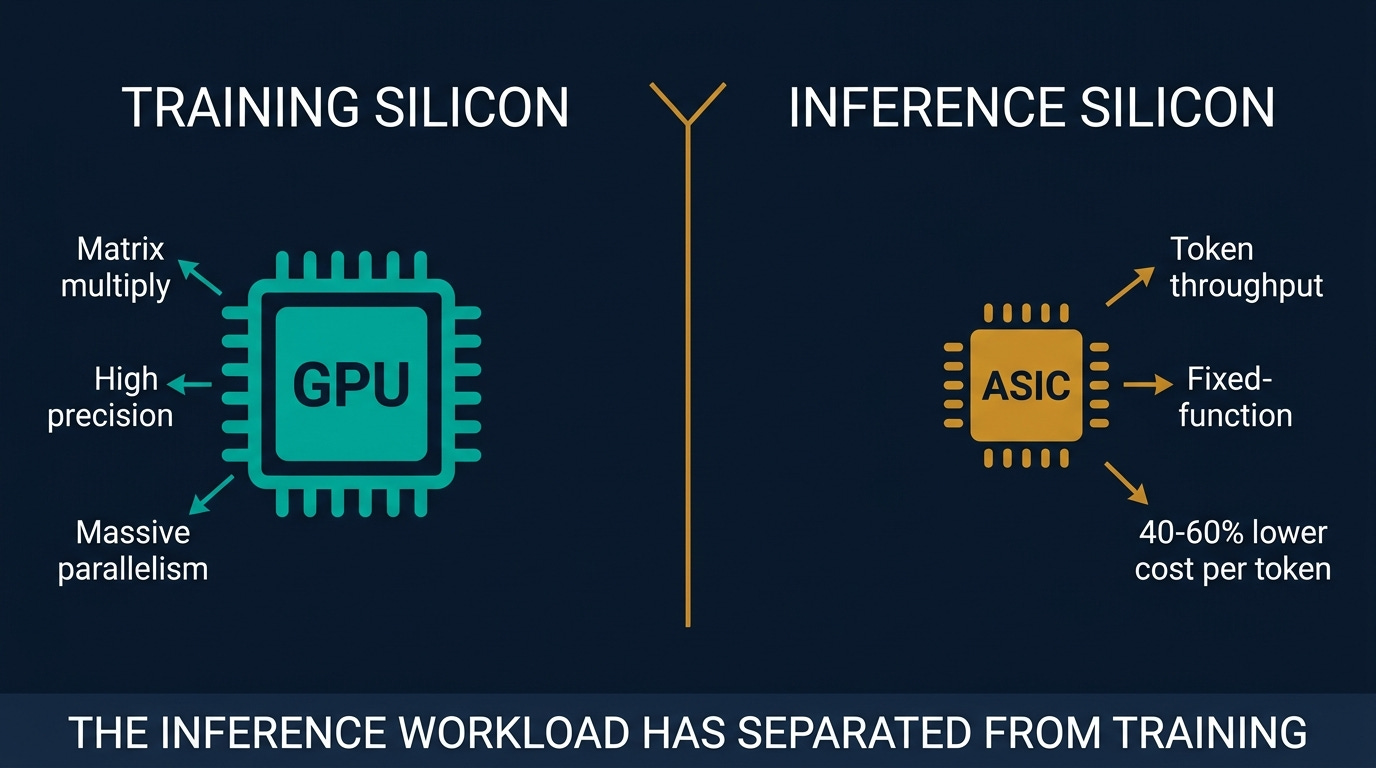

The inference workload is separating from the training workload at the silicon layer, and the economics of each are diverging.

NVIDIA has read the same signal and responded with NVLink Fusion, an architecture that lets third-party ASICs interconnect with NVIDIA components inside a single cluster, ensuring revenue participation regardless of which chip handles inference tokens.

The strategic question this issue pursues is not which chip wins. It is where your company sits on the inference stack, and the substitutability costs you incur at each layer when the economics compel a hardware decision.

🧭 The Thesis This Week

Consensus: GPU clusters are the default for both training and inference; the performance gap between general-purpose GPUs and custom silicon does not justify the design complexity for most enterprises.

The Vector™ position: The inference workload has separated from the training workload, and the economics of running at billion-token-per-day volumes are creating a structural cost disadvantage for GPU-first architectures that grows nonlinearly with scale.

Endpoint: By Q4 2027, custom silicon and ASICs handle 35 to 40 percent of production inference tokens; enterprises operating above 500 million tokens per day face a material silicon-strategy decision as a capex agenda item, not a software procurement question.

Grade: Within 90 days, Broadcom’s next earnings call either shows custom ASIC revenue growth exceeding 50 percent year-over-year, confirming accelerating enterprise adoption, or moderates toward GPU growth rates, validating the consensus GPU-first durability case.

📌 What Execs Should Do This Quarter

Map your inference token volume by workload type.

Generative text, image synthesis, and retrieval-augmented generation have materially different latency and throughput profiles. The ASIC value proposition concentrates on high-throughput, lower-latency workloads. Know which category your largest inference spend falls into before any vendor conversation.

Audit GPU contract renewal windows now.

If a significant GPU contract renews within the next 12 months, that window is the evaluation window for introducing ASIC alternatives into the competitive brief. Waiting until post-renewal locks in another cycle at current rates.

Add silicon strategy as a named line item on the technology radar.

The silicon decision is arriving as a capital question, not a vendor-selection question. Boards that first see it as a line item in the next refresh cycle will not have the context to govern it. A named item on the radar builds that context incrementally.

Place Cognichip on a monitoring brief, not in the evaluation queue.

The company is pre-revenue, and the design-compression claims are unverified at production scale. But Faraj Aalaei has built and sold a couple of chip companies, and Lip-Bu Tan does not join boards as a courtesy. A monitoring position costs nothing and avoids being surprised by a validation announcement.
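For teams that want to turn the first two actions above into a quick internal check, here is a minimal Python sketch. It assumes you can already export daily token counts per workload and a list of GPU contract renewal dates. The workload names, volumes, and dates are hypothetical placeholders, the 500 million tokens-per-day threshold is taken from the Endpoint statement in this issue, and the 12-month window is approximated as 365 days.

```python
from dataclasses import dataclass
from datetime import date

# Threshold from this issue's Endpoint statement: 500 million tokens per day.
SILICON_DECISION_THRESHOLD = 500_000_000


@dataclass
class Workload:
    name: str             # hypothetical internal label
    kind: str             # e.g. "text", "image", "rag"
    tokens_per_day: int   # measured daily inference volume


def volume_by_kind(workloads):
    """Sum daily token volume per workload type."""
    totals = {}
    for w in workloads:
        totals[w.kind] = totals.get(w.kind, 0) + w.tokens_per_day
    return totals


def largest_kind(totals):
    """Return the workload type with the highest daily token volume."""
    return max(totals, key=totals.get)


def above_threshold(totals, threshold=SILICON_DECISION_THRESHOLD):
    """True when total daily volume is above the threshold."""
    return sum(totals.values()) > threshold


def renews_within_window(renewal, today, window_days=365):
    """True when a contract renews within the window (12 months ~ 365 days)."""
    days_left = (renewal - today).days
    return 0 <= days_left <= window_days


if __name__ == "__main__":
    # Hypothetical example data, for illustration only.
    workloads = [
        Workload("support-chat", "text", 320_000_000),
        Workload("doc-search", "rag", 210_000_000),
        Workload("ad-creative", "image", 40_000_000),
    ]
    totals = volume_by_kind(workloads)
    print("Volume by type:", totals)
    print("Largest type:", largest_kind(totals))
    print("Above 500M tokens/day:", above_threshold(totals))
    print("Renewal in window:",
          renews_within_window(date(2026, 11, 1), date(2026, 4, 1)))
```

The point of the sketch is the ordering of the questions, not the arithmetic: which workload type dominates spend, whether total volume is above the threshold, and whether a renewal date falls inside the evaluation window.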

The full mechanism, vendor map, scenario probabilities, and board-ready exposure matrix are in the paid extension below.

🎯 Upgrade to Read the Full Analysis

If you are a CTO, head of infrastructure, board director, or technology investor making silicon and compute commitments that will carry your organization through the next three years, the paid extension delivers:

The four-layer inference stack mechanism,

The counter-argument from Goldman Sachs and Morgan Stanley,

An expert view from Jensen Huang,

The named vendor landscape across eight public and private players,

Three strategic implications for the audience,

The Inference Stack Exposure Matrix framework,

Five forward watch signals and three scenario probabilities anchored to observable 90-day triggers, and

A financial snapshot for every public company named in this issue.

The Vector™ is built to be a permanent reference asset, not just a weekly read.